DK

Ride • 1y

More like this

Recommendations from Medial

Bhoop Singh Gurjar

AI Deep Explorer | f... • 11m

Want to learn AI the right way in 2025? Don’t just take courses. Don’t just build toy projects. Look at what’s actually being used in the real world. The most practical way to really learn AI today is to follow the models that are shaping the indus

Siddharth K Nair

Thatmoonemojiguy 🌝 • 10m

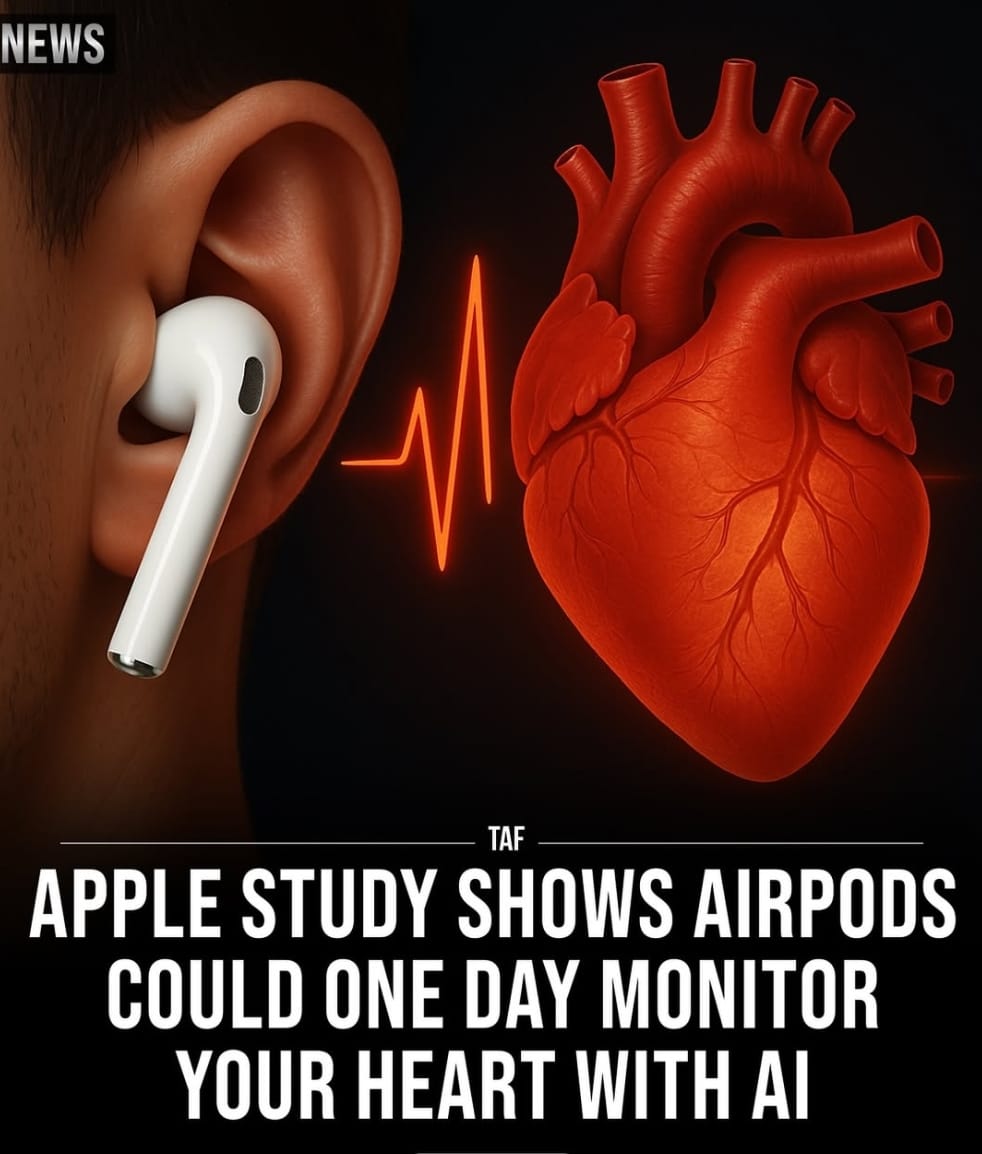

Apple 🍎 is planning to integrate AI-powered heart rate monitoring into AirPods 🎧 Apple's newest research suggests that AirPods could soon double as AI-powered heart monitors. In a study published on May 29, 2025, Apple's team tested six advanced A

Parampreet Singh

Python Developer 💻 ... • 1y

A 3B LLM outperforms a 405B LLM 🤯 Similarly, a 7B LLM outperforms OpenAI o1 & DeepSeek-R1 🤯 🤯 LLM: Llama 3 Datasets: MATH-500 & AIME-2024 This was shown in research on compute-optimal Test-Time Scaling (TTS). Recently, OpenAI o1 shows that Test-

EazyDiner India

Eat Out & Save More ... • 8m

AB's Absolute Barbecues Kalyan Nagar Bangalore Craving something smoky, spicy, and absolutely delicious? EazyDiner recommends AB’s Absolute Barbecues Kalyan Nagar Bangalore - a vibrant buffet restaurant where grills, global flavors, and great times

Account Deleted

Hey I am on Medial • 9m

My favourite websites for web design inspiration 👇 - https://Supahero.io - https://Seesaw.website - https://Navbar.gallery - https://Darkmodedesign.com - https://Designspells.com - https://Cofolios.com - https://Footer.design - https://Maxibestof.

Tarun Suthar

The Institute of Chartered Accountants of India • 10m

How to Conduct a Comprehensive Market Analysis 📊 1️⃣ Identify Key Sectors and Estimate Market Size 🎯 Understand the overall opportunity, major players, and sector contributions https://investindia.gov.in https://ibef.org https://dpiit.gov.in http
