More like this

Recommendations from Medial

Jainil Prajapati

Turning dreams into ... • 1y

India should focus on fine-tuning existing AI models and building applications rather than investing heavily in foundational models or AI chips, says Groq CEO Jonathan Ross. Is this the right strategy for India to lead in AI innovation? Thoughts?

AI Engineer

AI Deep Explorer | f... • 11m

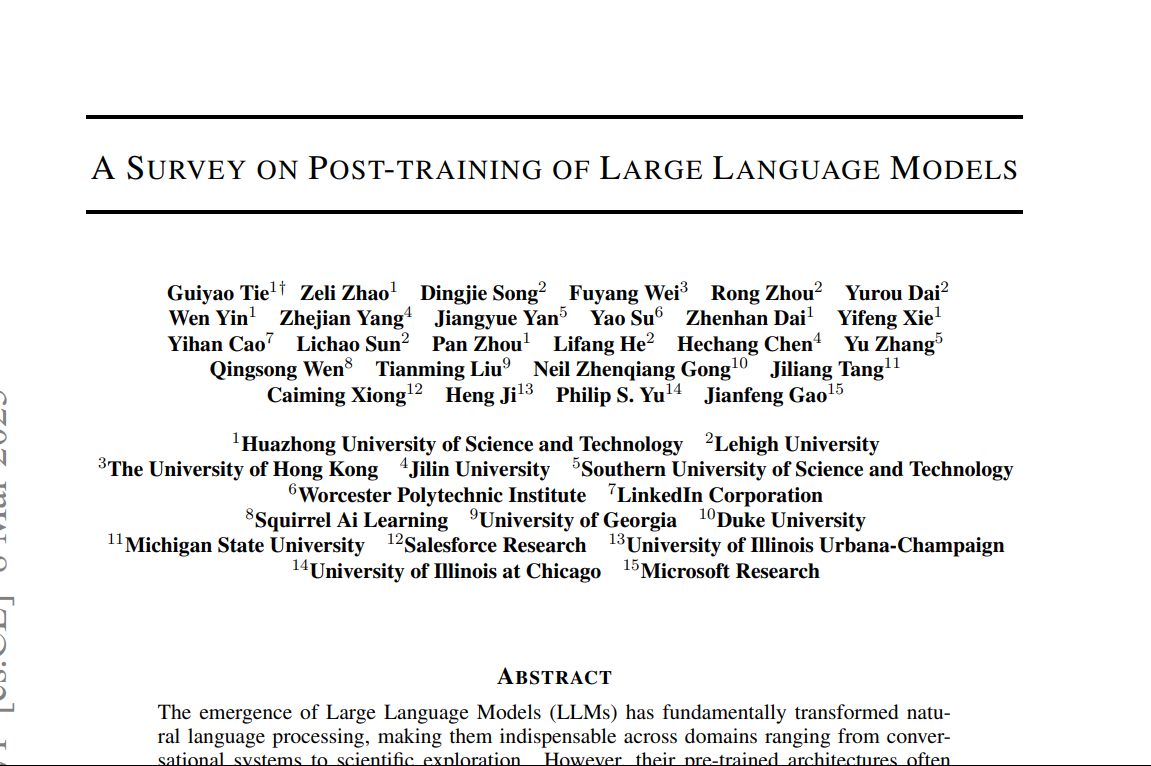

"A Survey on Post-Training of Large Language Models" This paper systematically categorizes post-training into five major paradigms: 1. Fine-Tuning 2. Alignment 3. Reasoning Enhancement 4. Efficiency Optimization 5. Integration & Adaptation 1️⃣ Fin

Mohammed Zaid

building hatchup.ai • 8m

OpenAI researchers have discovered hidden features within AI models that correspond to misaligned "personas," revealing that fine-tuning models on incorrect information in one area can trigger broader unethical behaviors through what they call "emerg

Nikhil Raj Singh

Entrepreneur | Build... • 6m

Hiring AI/ML Engineer 🚀 Join us to shape the future of AI. Work hands-on with LLMs, transformers, and cutting-edge architectures. Drive breakthroughs in model training, fine-tuning, and deployment that directly influence product and research outcom

Vansh Khandelwal

Full Stack Web Devel... • 2m

Agentic AI—systems that act autonomously, are context-aware and learn in real time—paired with small language models (SLMs) enables efficient, specialized automation. SLMs are lightweight, fast, fine-tunable for niche domains and accessible to smalle

AI Engineer

AI Deep Explorer | f... • 11m

LLM Post-Training: A Deep Dive into Reasoning LLMs This survey paper provides an in-depth examination of post-training methodologies in Large Language Models (LLMs) focusing on improving reasoning capabilities. While LLMs achieve strong performance
