Tanuj Sharma

Python Developer | A... • 1y

Hi, it is trained on a bigger dataset and with more GPU hours, so it is not fine-tuned from earlier versions; it is trained with more parameters than the earliest versions. If you have heard, its training cost is around $50-100M, while the training cost of their latest offering, o3, is around $1-1.4B so far, and the training cost of the initial version of ChatGPT was only around $10M. But as per the latest rumours, they have already exhausted all the available data on the internet in training their models, so in future it is going to be very hard for them to train their models on new data. After that, it would mostly be fine-tuning older versions with synthetic datasets.

More like this

Recommendations from Medial

Account Deleted

Hey I am on Medial • 10m

LATEST 🚨: 1. Alibaba launches ZeroSearch, that cuts AI search model training cost by up to 88% 2. It simulates search results without needing real time API calls to services like Google 3. Using Google’s SerpAPI, 64000 queries cost $480, but ZeroS

Bhoop Singh Gurjar

AI Deep Explorer | f... • 11m

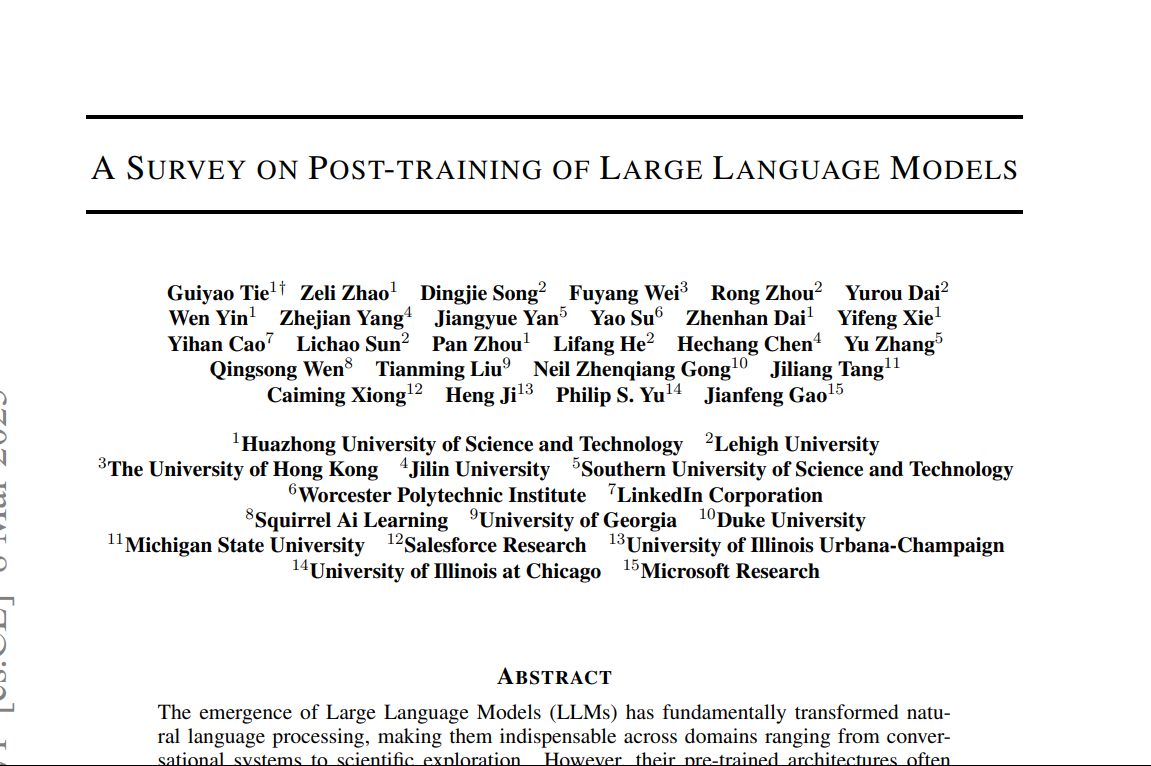

"A Survey on Post-Training of Large Language Models" This paper systematically categorizes post-training into five major paradigms: 1. Fine-Tuning 2. Alignment 3. Reasoning Enhancement 4. Efficiency Optimization 5. Integration & Adaptation 1️⃣ Fin

Animesh Kumar Singh

Hey I am on Medial • 8m

The "AI Personality Forge" Product: "Cognate". Cognate is not an AI assistant. It's a platform on your phone or computer that lets you forge, train, and deploy dozens of tiny, specialized AI "personalities" for specific tasks in seconds. How it wo

Nikhil Raj Singh

Entrepreneur | Build... • 6m

Hiring AI/ML Engineer 🚀 Join us to shape the future of AI. Work hands-on with LLMs, transformers, and cutting-edge architectures. Drive breakthroughs in model training, fine-tuning, and deployment that directly influence product and research outcom
