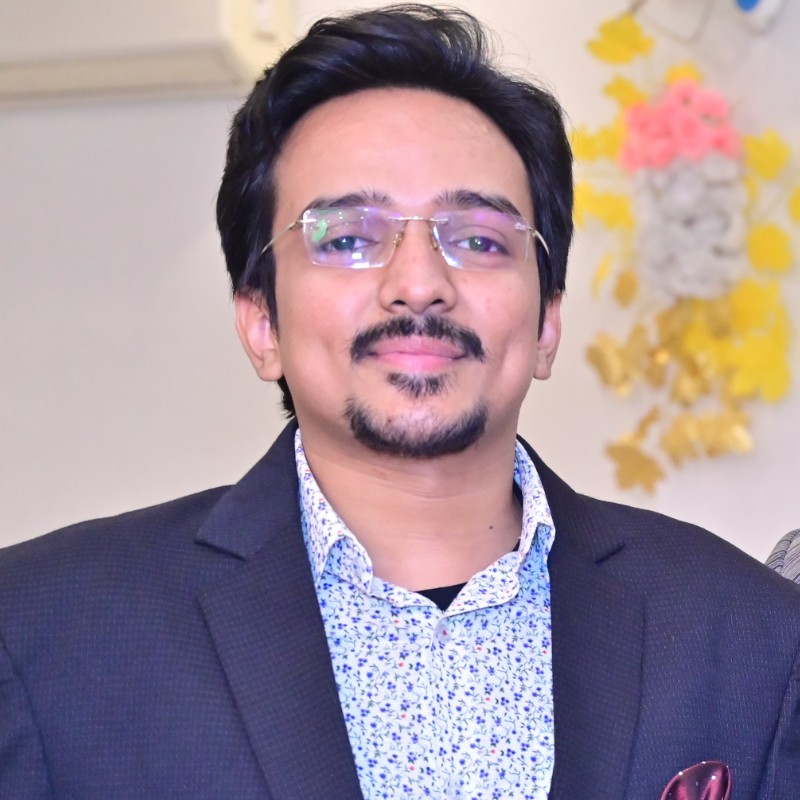

Aman Tiwari

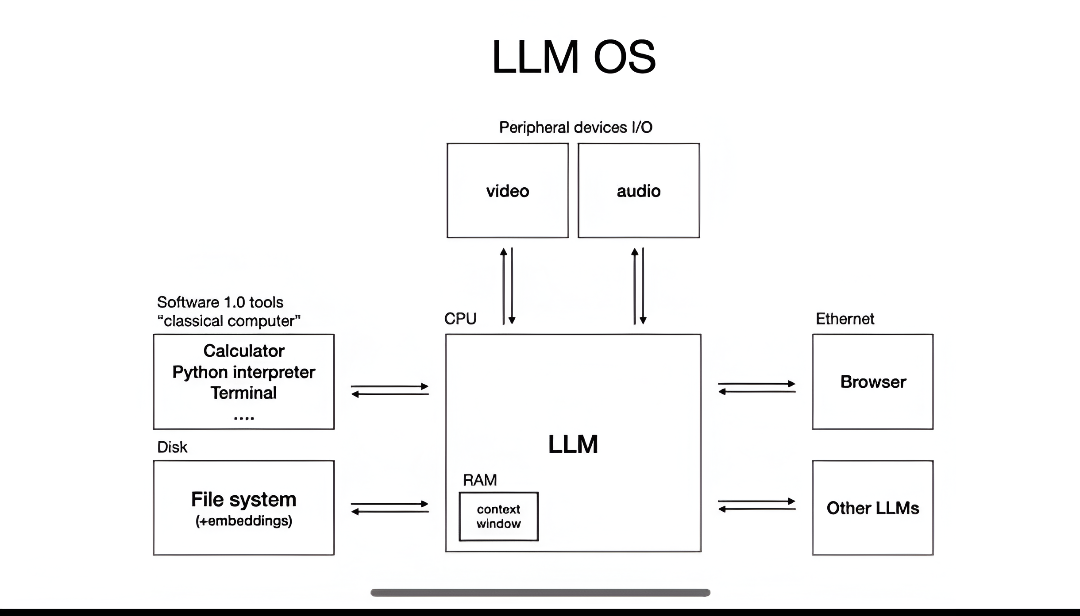

Founder | Kalika OS • 1m

🤔 I'm unsure about one thing: should I integrate my LLM directly into the architecture of my OS, or should I launch it separately and connect it to the OS infra via MCP servers? All suggestions are welcome here... 🫡
